Installing CaaS 4.0 for CAP

Infrastructure:

Servers:

| Load balancer | | |
| Administration | | |
| Master 01 | | |
| Master 02 | | |
| Master 03 | | |
| Worker 01 | | |
| Worker 02 | | |
| Worker 03 | | |

Preparing the template machine for CaaS

zypper in vim

zypper in sudo

zypper in iputils

zypper install insserv-compat

zypper in nfs-client nfs-utils yast2-nfs-client

zypper se patterns | grep caasp

grep "swapaccount=1" /etc/default/grub || sudo sed -i -r 's|^(GRUB_CMDLINE_LINUX_DEFAULT=)\"(.*.)\"|\1\"\2 swapaccount=1 \"|' /etc/default/grub

sudo grub2-mkconfig -o /boot/grub2/grub.cfg

sudo systemctl reboot
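After the reboot it is worth confirming that the kernel actually booted with the new parameter. The following is a minimal sketch, assuming the check is done by reading `/proc/cmdline`; the function name `has_swapaccount` is illustrative and not part of any SUSE tooling.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the given kernel command line file
# (default: /proc/cmdline) contains the swapaccount=1 parameter.
has_swapaccount() {
    local cmdline_file="${1:-/proc/cmdline}"
    grep -qw 'swapaccount=1' "$cmdline_file" 2>/dev/null
}

# Report the result for the running kernel.
if has_swapaccount; then
    echo "swapaccount=1 is active"
else
    echo "swapaccount=1 is NOT active; re-check /etc/default/grub"
fi
```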

echo "admin ALL=(ALL) NOPASSWD: ALL" >> /etc/sudoers

rm /etc/machine-id /var/lib/zypp/AnonymousUniqueId /var/lib/systemd/random-seed /var/lib/dbus/machine-id /var/lib/wicked/*

snapper list

snapper delete 3 4 5 6

snapper list

vim /etc/sysconfig/network/ifcfg-eth0

hostnamectl set-hostname sucaasadmhm01

reboot

zypper in -t pattern SUSE-CaaSP-Management

shutdown -h now

Adjustments on the clones

vim /etc/sysconfig/network/ifcfg-eth0

hostnamectl set-hostname <clone-name>

Installing VMware Tools

cd /opt/

zypper in wget insserv-compat

wget http://sumanager01/pub/repositories/VMwareTools-10.3.20/VMwareTools-10.3.20-14389227.tar.gz

tar -zxvf VMwareTools-10.3.20-14389227.tar.gz

cd vmware-tools-distrib/

./vmware-install.pl

cd ..

rm -rf VMwareTools-10.3.20-14389227.tar.gz vmware-tools-distrib/

exit

Preparing the Nginx load balancer for the master servers

Add the nginx repositories.

zypper ar --type yum --refresh --check http://nginx.org/packages/sles/15/ nginx-stable

zypper addrepo --type yum --refresh --check http://nginx.org/packages/mainline/sles/15/ nginx-mainline

zypper in nginx nginx-module-geoip nginx-module-image-filter nginx-module-njs nginx-module-perl nginx-module-xslt nginx-source

***Note: enable stream_tcp when installing nginx

Create a file with the following configuration, adjusting it to the hostnames of the masters.

sucaasbalpd01:/etc/nginx # cat tcpconf.d/stream.conf

error_log /var/log/nginx/stream-root_error.log;

stream {
    log_format proxy '$remote_addr [$time_local] '
                     '$protocol $status $bytes_sent $bytes_received '
                     '$session_time "$upstream_addr"';

    error_log /var/log/nginx/k8s-masters-lb-error.log;
    access_log /var/log/nginx/k8s-masters-lb-access.log proxy;

    upstream k8s-masters {
        #hash $remote_addr consistent;
        server sucaaskmnhm01:6443 weight=1 max_fails=1;
        server sucaaskmnhm02:6443 weight=1 max_fails=1;
        server sucaaskmnhm03:6443 weight=1 max_fails=1;
    }

    server {
        listen 6443;
        proxy_connect_timeout 1s;
        proxy_timeout 3s;
        proxy_pass k8s-masters;
    }

    upstream dex-backends {
        #hash $remote_addr consistent;
        server sucaaskmnhm01:32000 weight=1 max_fails=1;
        server sucaaskmnhm02:32000 weight=1 max_fails=1;
        server sucaaskmnhm03:32000 weight=1 max_fails=1;
    }

    server {
        listen 32000;
        proxy_connect_timeout 1s;
        proxy_timeout 3s;
        proxy_pass dex-backends;
    }

    upstream gangway-backends {
        #hash $remote_addr consistent;
        server sucaaskmnhm01:32001 weight=1 max_fails=1;
        server sucaaskmnhm02:32001 weight=1 max_fails=1;
        server sucaaskmnhm03:32001 weight=1 max_fails=1;
    }

    server {
        listen 32001;
        proxy_connect_timeout 1s;
        proxy_timeout 3s;
        proxy_pass gangway-backends;
    }
}

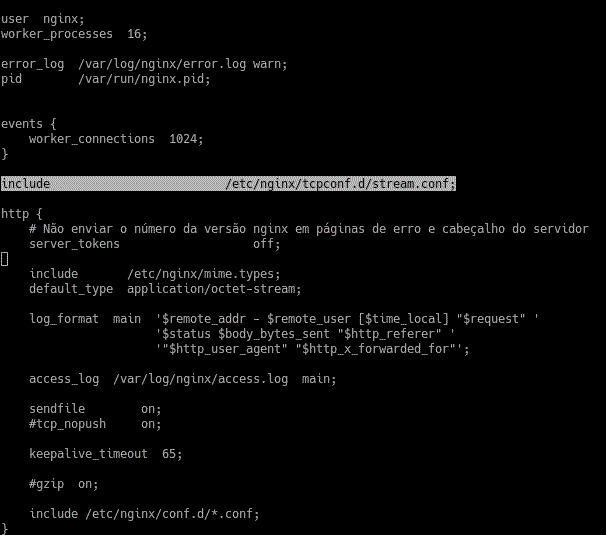

Configure nginx.conf to include the newly created file:

include /etc/nginx/tcpconf.d/stream.conf;
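The three upstream/server pairs in the stream configuration differ only in name and port, so they can be generated from a single list of masters, which avoids hostname typos. This is a minimal sketch under that assumption; the function name `stream_pair` is hypothetical, and the output still has to be wrapped in the `stream { ... }` block together with the logging directives.

```shell
#!/bin/bash
# Hypothetical helper: print one upstream/server pair for the nginx stream block.
# Usage: stream_pair NAME PORT HOST...
stream_pair() {
    local name="$1" port="$2" host
    shift 2
    printf 'upstream %s {\n' "$name"
    for host in "$@"; do
        printf '    server %s:%s weight=1 max_fails=1;\n' "$host" "$port"
    done
    printf '}\n'
    printf 'server {\n    listen %s;\n    proxy_connect_timeout 1s;\n    proxy_timeout 3s;\n    proxy_pass %s;\n}\n' "$port" "$name"
}

masters="sucaaskmnhm01 sucaaskmnhm02 sucaaskmnhm03"
# Word splitting of $masters is intentional here.
stream_pair k8s-masters 6443 $masters
stream_pair dex-backends 32000 $masters
stream_pair gangway-backends 32001 $masters
```

After editing the configuration, `nginx -t` checks the syntax before the service is restarted.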

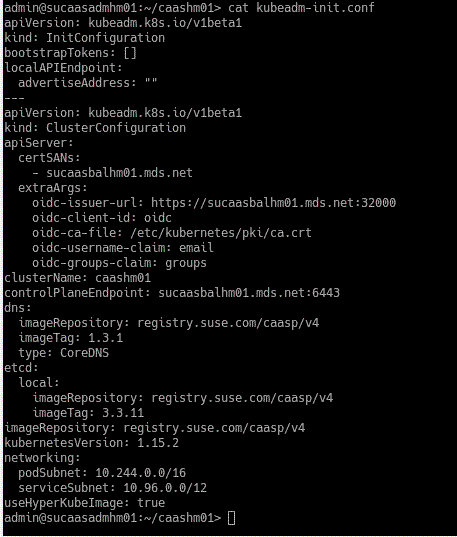

Preparing the cluster configuration (after the masters and workers are configured)

***Note: use the admin user, do not use root!

***Note:

# in a cluster with more than one master, use the address of the nginx load balancer configured above

# single master (no load balancer)

skuba cluster init --control-plane sucaaskmnhm01 caashm01

# multiple masters (behind the load balancer)

skuba cluster init --control-plane sucaasbalhm01 caashm01

cd /home/admin/caashm01

cat kubeadm-init.conf

ssh-keygen -t rsa -b 4096

eval `ssh-agent`

ssh-add

ssh-copy-id sucaaskmnhm01

ssh-copy-id sucaaskmnhm02

ssh-copy-id sucaaskmnhm03

ssh-copy-id sucaaskwnhm01

ssh-copy-id sucaaskwnhm02

ssh-copy-id sucaaskwnhm03

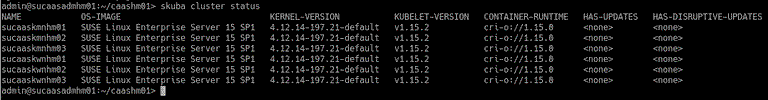

skuba -v 9 node bootstrap --user admin --sudo --target sucaaskmnhm01 sucaaskmnhm01

***Note: in environments where the firewall performs SSL inspection, the installation may fail because the repositories cannot be reached.

skuba -v 9 node join --role master --user admin --sudo --target sucaaskmnhm02 sucaaskmnhm02

skuba -v 9 node join --role master --user admin --sudo --target sucaaskmnhm03 sucaaskmnhm03

skuba -v 9 node join --role worker --user admin --sudo --target sucaaskwnhm01 sucaaskwnhm01

skuba -v 9 node join --role worker --user admin --sudo --target sucaaskwnhm02 sucaaskwnhm02

skuba -v 9 node join --role worker --user admin --sudo --target sucaaskwnhm03 sucaaskwnhm03

skuba cluster status
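Each join command above repeats the hostname twice and differs only in role and host, so the list can be generated rather than typed. This is a minimal sketch, assuming the same flags as the commands above; `join_cmd` is a hypothetical helper that only prints the commands for review.

```shell
#!/bin/bash
# Hypothetical helper: print the skuba join command for one node.
# Usage: join_cmd ROLE HOST   (ROLE is master or worker)
join_cmd() {
    local role="$1" host="$2"
    case "$role" in
        master|worker) ;;
        *) echo "unknown role: $role" >&2; return 1 ;;
    esac
    echo "skuba -v 9 node join --role $role --user admin --sudo --target $host $host"
}

# Print the commands for review. They must be run from inside the
# cluster definition directory created by `skuba cluster init`.
for host in sucaaskmnhm02 sucaaskmnhm03; do
    join_cmd master "$host"
done
for host in sucaaskwnhm01 sucaaskwnhm02 sucaaskwnhm03; do
    join_cmd worker "$host"
done
```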

Preparing the Kubernetes "config" file

mkdir /home/admin/.kube

cp /home/admin/caashm01/admin.conf /home/admin/.kube/config

cd /home/admin/.kube/

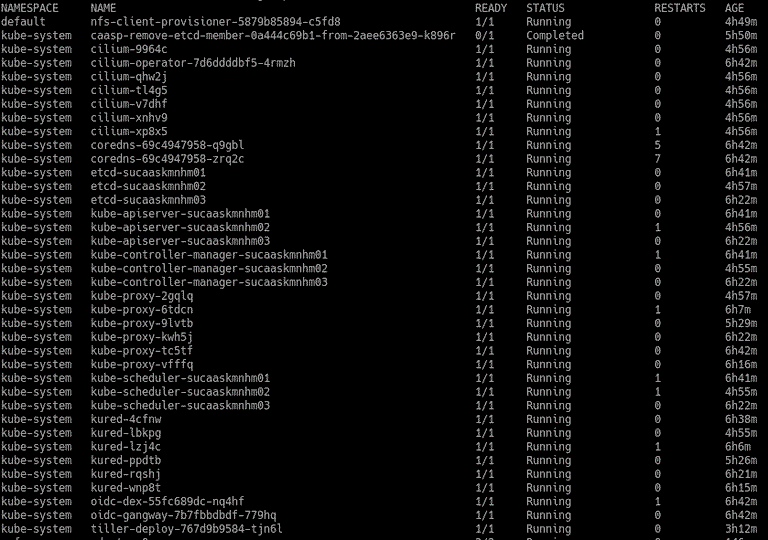

kubectl get nodes

kubectl get pods --all-namespaces

Configuring NFS storage for Kubernetes

sudo zypper in git-core

cd /home/admin/caashm01

git clone https://github.com/neuhauss/external-storage

cd external-storage/

cd nfs-client/

cd deploy/

vim class.yaml

***Note: adjust the NFS name

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-hml
provisioner: fuseim.pri/ifs # or choose another name, must match deployment's env PROVISIONER_NAME
parameters:
  archiveOnDelete: "false"

vim deployment.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
---
kind: Deployment
apiVersion: extensions/v1beta1
metadata:
  name: nfs-client-provisioner
spec:
  replicas: 1
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          # set the version, e.g. v3.1.0-k8s1.11
          # image: quay.io/external_storage/nfs-client-provisioner:v3.1.0-k8s1.11
          image: quay.io/external_storage/nfs-client-provisioner:latest
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs
            - name: NFS_SERVER
              value: 10.222.18.13
            - name: NFS_PATH
              value: /VOL_DOCKER_HOM
      volumes:
        - name: nfs-client-root
          nfs:
            server: 10.222.18.13
            path: /VOL_DOCKER_HOM

kubectl create -f class.yaml

storageclass.storage.k8s.io/nfs created

kubectl create -f rbac.yaml

serviceaccount/nfs-client-provisioner created

clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created

clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created

role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created

rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created

kubectl create -f deployment.yaml

deployment.extensions/nfs-client-provisioner created

Setting the storage class as default

kubectl patch storageclass nfs-hml -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
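A mistyped annotation key is accepted silently by `kubectl patch`, so it helps to confirm that the class is really flagged as default. This is a minimal sketch, assuming the usual `kubectl get storageclass` listing in which the default class is shown with `(default)` after its name; `default_storage_classes` is a hypothetical helper.

```shell
#!/bin/bash
# Hypothetical helper: reads `kubectl get storageclass --no-headers` output on
# stdin and prints the names of the classes flagged as "(default)".
default_storage_classes() {
    awk '$2 == "(default)" { print $1 }'
}
```

It would be used as `kubectl get storageclass --no-headers | default_storage_classes`, which should print `nfs-hml` once the patch has taken effect.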

Configuring Tiller

kubectl create clusterrolebinding tiller --clusterrole=cluster-admin --serviceaccount=kube-system:tiller

clusterrolebinding.rbac.authorization.k8s.io/tiller created

Starting the Tiller service

helm init --service-account tiller

$HELM_HOME has been configured at /home/admin/.helm.

Tiller (the Helm server-side component) has been installed into your Kubernetes Cluster.

Please note: by default, Tiller is deployed with an insecure 'allow unauthenticated users' policy.

To prevent this, run `helm init` with the --tiller-tls-verify flag.

For more information on securing your installation see: https://docs.helm.sh/using_helm/#securing-your-helm-installation

Checking that the pods are being created

kubectl get pods -A

kubectl get deployments -A

***Note: in case of error, delete the pod

Installing SCF

*** Note: SCF 2.17.1 ships CF 1.4.1, which still supports cflinuxfs2, required by the PHP 5.6 buildpack.

*** SCF 2.18.1 ships CF 1.5.1, with several fixes for cluster HA, but it no longer supports cflinuxfs2, only cflinuxfs3, which the PHP 5.6 buildpack does not support.

*** Installing SCF on a cluster with sizing larger than the default proved unstable in every test run with failure simulation.

*** The NFS deployment must be edited to add one instance per worker.

Note: set the storage class as default

kubectl patch storageclass nfs-hml -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

or

kubectl patch storageclass managed-nfs-storage -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

*** Note: add the SUSE Helm chart repository:

*** helm repo add suse https://kubernetes-charts.suse.com/

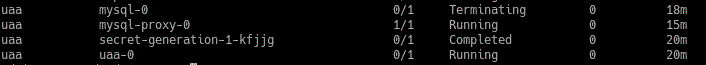

helm install suse/uaa --name uaa --namespace uaa --values scf.config-values.yaml --version 2.17.1

* wait for the pods to start.
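Waiting can be scripted instead of watched by hand. This is a minimal sketch, assuming the standard column layout of `kubectl get pods --no-headers` (NAME, READY, STATUS, ...); the function names and the 10-second polling interval are illustrative choices, not part of the SUSE procedure.

```shell
#!/bin/bash
# Hypothetical helper: reads `kubectl get pods --no-headers` output on stdin and
# succeeds only if every pod is Completed or Running with all containers ready.
all_pods_ready() {
    awk '
        $3 == "Completed" { next }
        {
            split($2, r, "/")
            if ($3 != "Running" || r[1] != r[2]) bad++
        }
        END { exit (bad > 0 || NR == 0) ? 1 : 0 }
    '
}

# Poll a namespace until all pods are ready or the timeout (seconds) expires.
wait_for_pods() {
    local ns="$1" timeout="${2:-1800}" waited=0
    until kubectl get pods --namespace "$ns" --no-headers 2>/dev/null | all_pods_ready; do
        [ "$waited" -ge "$timeout" ] && return 1
        sleep 10
        waited=$((waited + 10))
    done
}
```

For example, `wait_for_pods uaa && echo "uaa is up"` blocks until the uaa namespace is ready or 30 minutes have passed.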

SECRET=$(kubectl get pods --namespace uaa --output jsonpath='{.items[?(.metadata.name=="uaa-0")].spec.containers[?(.name=="uaa")].env[?(.name=="INTERNAL_CA_CERT")].valueFrom.secretKeyRef.name}')

CA_CERT="$(kubectl get secret $SECRET --namespace uaa --output jsonpath="{.data['internal-ca-cert']}" | base64 --decode -)"
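If the uaa-0 pod is not running yet, both variables end up empty and the following `helm install` receives an empty certificate. This is a minimal sketch of a guard, assuming the certificate is PEM-encoded; `looks_like_pem` and `check_ca_cert` are hypothetical names.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the argument looks like a PEM certificate,
# i.e. it contains both the BEGIN and END CERTIFICATE markers in that order.
looks_like_pem() {
    case "$1" in
        *"-----BEGIN CERTIFICATE-----"*"-----END CERTIFICATE-----"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Guard to call right after CA_CERT is set and before `helm install suse/cf`.
check_ca_cert() {
    if ! looks_like_pem "${CA_CERT:-}"; then
        echo "CA_CERT is empty or not a PEM certificate; is the uaa-0 pod running?" >&2
        return 1
    fi
}
```

Running `check_ca_cert || exit 1` between the two steps stops the procedure before an unusable release is created.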

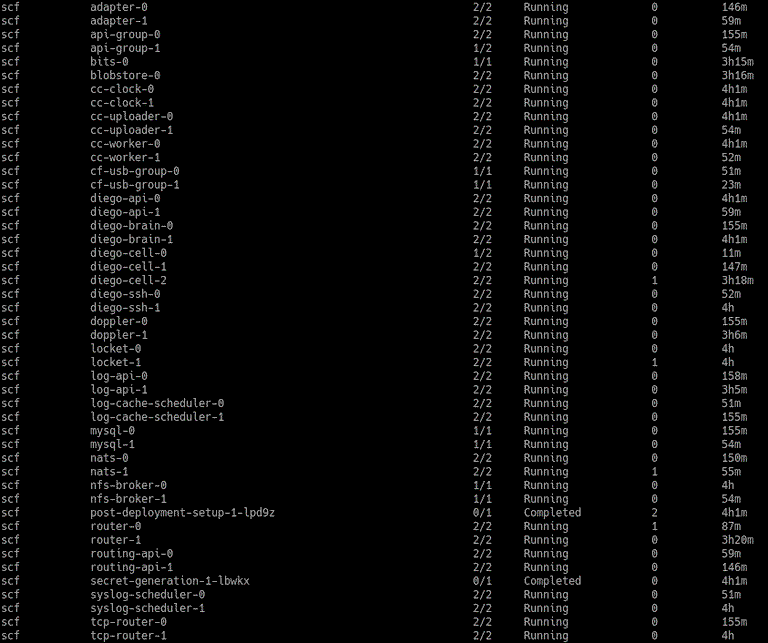

helm install suse/cf --name scf --namespace scf --values scf.config-values.yaml --set "secrets.UAA_CA_CERT=${CA_CERT}" --version 2.17.1

* api-group-0 at 1/2 CrashLoopBackOff in the scf namespace is caused by the bits-0 pod

* kubectl delete pod -n scf bits-0

Installing the Stratos console

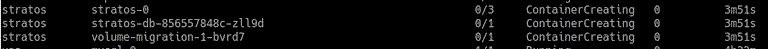

helm install suse/console --name console --namespace stratos --values scf.config-values.yaml --set console.techPreview=true

Installing the monitoring service

Create the file stratos-metrics-values.yaml

env:
  DOPPLER_PORT: 443
kubernetes:
  authEndpoint: https://api.caphml
prometheus:
  kubeStateMetrics:
    enabled: true
nginx:
  username: admin
  password: password
useLb: true

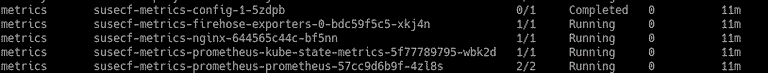

helm install suse/metrics --name susecf-metrics --namespace metrics --values scf.config-values.yaml --values stratos-metrics-values.yaml

Installing the CF CLI (Cloud Foundry Command Line Interface)

SUSEConnect --product sle-module-cap-tools/15.1/x86_64

zypper in cf-cli

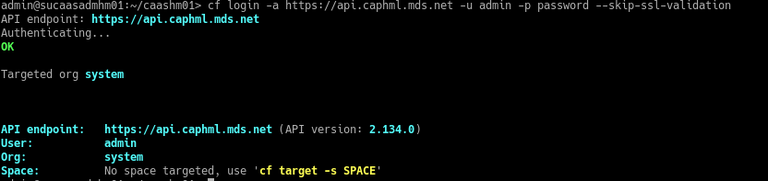

cf login -a https://api.caphml -u admin -p password --skip-ssl-validation

or

cf login -a https://api.capprd -u admin -p password --skip-ssl-validation -o SEI_ESP_HML -s LAB

Debugging CF

export CF_TRACE=true

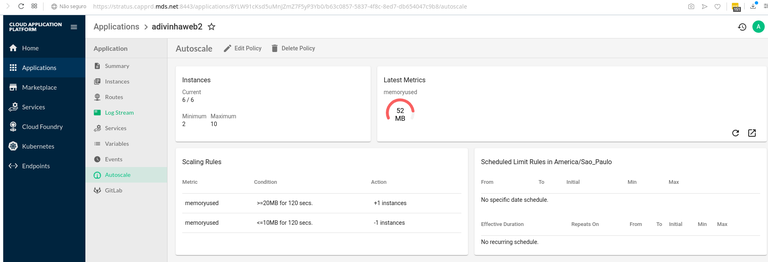

cf push

Enabling Autoscale

cf install-plugin app-autoscaler-plugin

SECRET=$(kubectl get pods --namespace uaa --output jsonpath='{.items[?(.metadata.name=="uaa-0")].spec.containers[?(.name=="uaa")].env[?(.name=="INTERNAL_CA_CERT")].valueFrom.secretKeyRef.name}')

CA_CERT="$(kubectl get secret $SECRET --namespace uaa --output jsonpath="{.data['internal-ca-cert']}" | base64 --decode -)"

helm upgrade scf suse/cf --values scf.config-values.yaml --set "secrets.UAA_CA_CERT=${CA_CERT}" --set "enable.autoscaler=true"

Enabling Metrics

Create the file: stratos-metrics-values.yaml

env:
  DOPPLER_PORT: 443
kubernetes:
  authEndpoint: https://api.capprd
prometheus:
  kubeStateMetrics:
    enabled: true
nginx:
  username: admin
  password: password
useLb: true

Run the commands:

helm install suse/metrics --name susecf-metrics --namespace metrics --values scf.config-values.yaml --values stratos-metrics-values.yaml

watch --color 'kubectl get pods --namespace metrics'
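The values file is the same in both environments except for `authEndpoint`, so it can be produced by one parameterized function. This is a minimal sketch; the key nesting follows the layout documented for the SUSE metrics chart, and the `write_metrics_values` name and the `METRICS_PASSWORD` environment variable are assumptions of this example, used to keep the password out of the file template.

```shell
#!/bin/bash
# Hypothetical helper: write the metrics values file for one environment.
# Usage: write_metrics_values AUTH_ENDPOINT [OUTPUT_FILE]
# The nginx password is read from METRICS_PASSWORD (an assumption of this sketch).
write_metrics_values() {
    local endpoint="$1" out="${2:-stratos-metrics-values.yaml}"
    if [ -z "${METRICS_PASSWORD:-}" ]; then
        echo "METRICS_PASSWORD is not set" >&2
        return 1
    fi
    cat > "$out" <<EOF
env:
  DOPPLER_PORT: 443
kubernetes:
  authEndpoint: ${endpoint}
prometheus:
  kubeStateMetrics:
    enabled: true
nginx:
  username: admin
  password: ${METRICS_PASSWORD}
useLb: true
EOF
}
```

For the production cluster this would be called as `write_metrics_values https://api.capprd` after exporting `METRICS_PASSWORD`.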

Enabling the Broker

SECRET=$(kubectl get pods --namespace uaa --output jsonpath='{.items[?(.metadata.name=="uaa-0")].spec.containers[?(.name=="uaa")].env[?(.name=="INTERNAL_CA_CERT")].valueFrom.secretKeyRef.name}')

CA_CERT="$(kubectl get secret $SECRET --namespace uaa --output jsonpath="{.data['internal-ca-cert']}" | base64 --decode -)"

helm upgrade scf suse/cf --values scf.config-values.yaml --set "secrets.UAA_CA_CERT=${CA_CERT}" --set "enable.cf_usb=true"

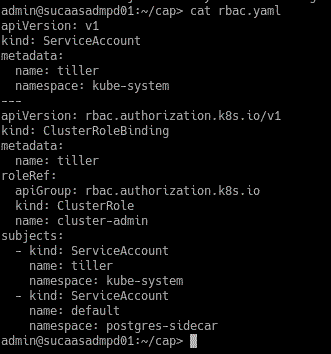

Add to the rbac.yaml file

current:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: tiller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: tiller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: tiller
  namespace: kube-system

Append at the end:

- kind: ServiceAccount
  name: default
  namespace: postgres-sidecar

Run the command:

kubectl apply -f rbac.yaml

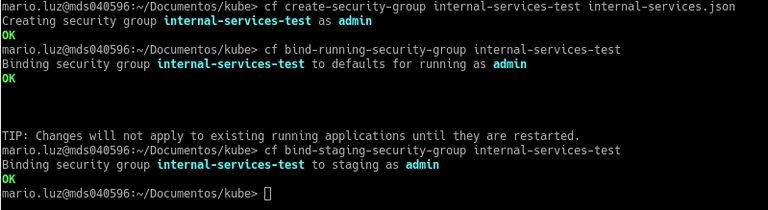

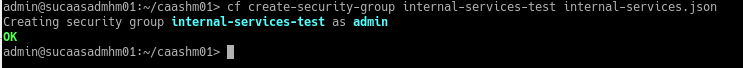

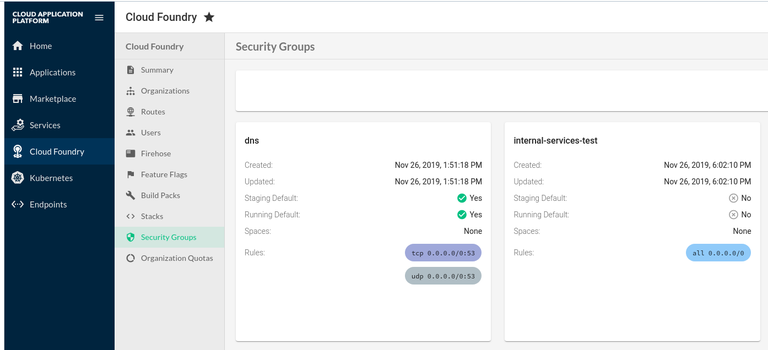

Enabling communication with internal services:

echo > "internal-services.json" '[{ "destination": "0.0.0.0/0", "protocol":"all" }]'

cf create-security-group internal-services internal-services.json

cf bind-running-security-group internal-services

cf bind-staging-security-group internal-services

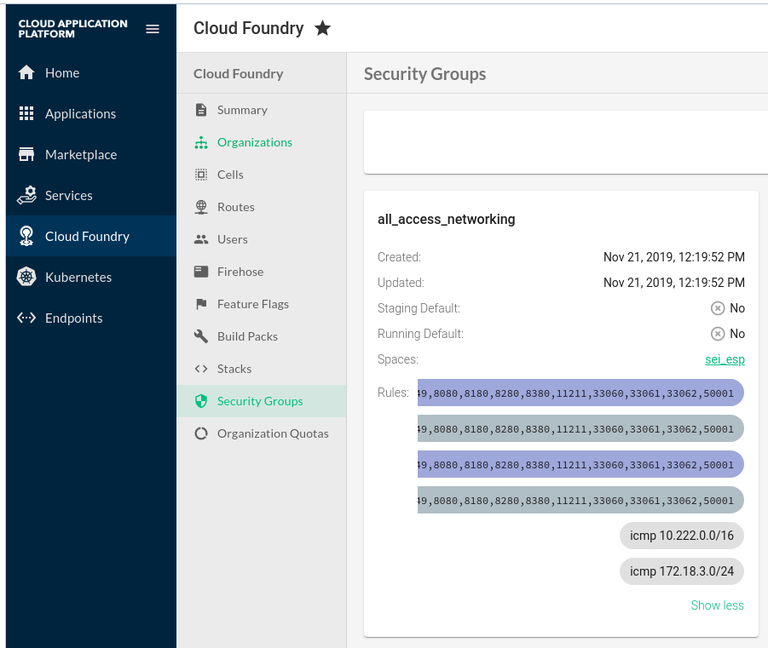

Creating the network security group for port mapping:

vim network_all.json

[

{ "protocol": "tcp", "destination": "10.222.0.0/16", "ports": "22,25,53,80,123,389,443,587,636,1521,2049,3268,3269,3306,5432,6446,6447,6448,6449,8080,8180,8280,8380,11211,33060,33061,33062,50001", "description": "Allow All Network TCP Traffic" },

{ "protocol": "udp", "destination": "10.222.0.0/16", "ports": "22,25,53,80,123,389,443,587,636,1521,2049,3268,3269,3306,5432,6446,6447,6448,6449,8080,8180,8280,8380,11211,33060,33061,33062,50001", "description": "Allow All Network UDP Traffic" },

{ "protocol": "tcp", "destination": "172.18.3.0/24", "ports": "22,25,53,80,123,389,443,587,636,1521,2049,3268,3269,3306,5432,6446,6447,6448,6449,8080,8180,8280,8380,11211,33060,33061,33062,50001", "description": "Allow All Network TCP Traffic" },

{ "protocol": "udp", "destination": "172.18.3.0/24", "ports": "22,25,53,80,123,389,443,587,636,1521,2049,3268,3269,3306,5432,6446,6447,6448,6449,8080,8180,8280,8380,11211,33060,33061,33062,50001", "description": "Allow All Network UDP Traffic" },

{ "protocol": "icmp", "destination": "10.222.0.0/16", "code": -1, "type": -1, "description": "Allow All Network ICMP Traffic" },

{ "protocol": "icmp", "destination": "172.18.3.0/24", "code": -1, "type": -1, "description": "Allow All Network ICMP Traffic" }

]

cf create-security-group all_access_networking network_all.json

cf bind-security-group all_access_networking LAB sei_esp
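The rule file repeats the same long port list for every network and protocol, so it can be generated from one port string and a list of CIDRs. This is a minimal sketch; `security_group_rules` is a hypothetical helper, it omits the optional `description` fields, and the port list in the example is shortened for readability.

```shell
#!/bin/bash
# Hypothetical helper: print a CF security group rule set (JSON) that allows
# the given ports over TCP and UDP, plus all ICMP, for each destination CIDR.
# Usage: security_group_rules "PORTS" CIDR...
security_group_rules() {
    local ports="$1" cidr proto sep=""
    shift
    echo "["
    for cidr in "$@"; do
        for proto in tcp udp; do
            printf '%s  { "protocol": "%s", "destination": "%s", "ports": "%s" }' \
                "$sep" "$proto" "$cidr" "$ports"
            sep=$',\n'
        done
        printf '%s  { "protocol": "icmp", "destination": "%s", "code": -1, "type": -1 }' \
            "$sep" "$cidr"
    done
    printf '\n]\n'
}

# Shortened port list; replace it with the full list used in network_all.json.
security_group_rules "22,53,80,443" 10.222.0.0/16 172.18.3.0/24
```

Redirecting the output to `network_all.json` gives a file that can be passed to `cf create-security-group` as above.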

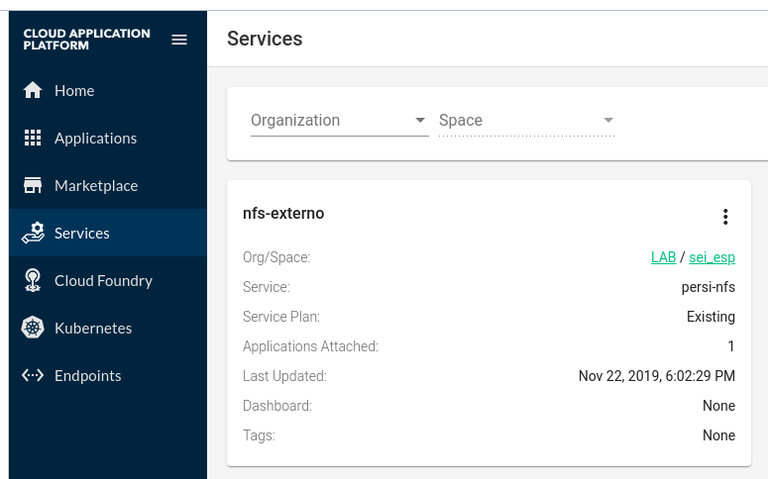

Creating the NFS service to map an NFS share

cf enable-service-access persi-nfs

cf create-service persi-nfs Existing nfs-externo -c '{"share": "suarqvpd01/opt/cap_files"}'

cf bind-service cf-ex-php-info nfs-externo -c '{"uid":"1000","gid":"1000","mount":"/home/vcap/app/cap_files"}'

cf restage cf-ex-php-info

Configuring Microsoft AD authentication

Authenticating against the cluster:

uaac.ruby2.5 target --skip-ssl-validation https://uaa.capprd:2793

uaac.ruby2.5 token client get admin --secret password

List the authentication providers:

uaac.ruby2.5 curl /identity-providers --insecure --header "X-Identity-Zone-Id: scf"

Add the AD authentication provider:

uaac.ruby2.5 curl /identity-providers?rawConfig=true \
    --request POST \
    --insecure \
    --header 'Content-Type: application/json' \
    --header 'X-Identity-Zone-Subdomain: scf' \
    --data '{
  "type" : "ldap",
  "config" : {
    "ldapProfileFile" : "ldap/ldap-search-and-bind.xml",
    "baseUrl" : "ldap://10.222.10.61:389",
    "bindUserDn" : "CN=LDAP Search,OU=SERVICOS,OU=ADMINISTRATIVOS,OU=USUARIOS,OU=XXXXXX_NOVO,DC=XXXXXX,DC=net",
    "bindPassword" : "ldap123",
    "userSearchBase" : "OU=USUARIOS,OU=XXXXXX_NOVO,DC=XXXXXX,DC=net",
    "userSearchFilter" : "sAMAccountName={0}",
    "ldapGroupFile" : "ldap/ldap-groups-map-to-scopes.xml",
    "groupSearchBase" : "dc=XXXXXX,dc=net",
    "groupSearchFilter" : "(objectCategory=group)"
  },
  "originKey" : "ldap",
  "name" : "XXXXXX_AD",
  "active" : true
}'

Delete an authentication provider:

uaac.ruby2.5 curl /identity-providers/1deb966f-4638-4132-a760-adfb3bb9174b --request DELETE --insecure --header "X-Identity-Zone-Id:scf"

Creating users and setting permissions in an organization:

cf create-user mario.luz --origin ldap

cf set-space-role mario.luz XXXXXX dev SpaceDeveloper

cf set-org-role mario.luz XXXXXX OrgManager

cf create-user luciano.bolonheis --origin ldap

cf set-space-role luciano.bolonheis XXXXXX dev SpaceDeveloper

cf set-org-role luciano.bolonheis XXXXXX OrgManager

Adding buildpacks

PHP 5.6 with support for the SLES12 stack

mkdir php5-v4.3

cd php5-v4.3

wget https://cf-buildpacks.suse.com/php-buildpack-v4.3.70.2-3.1-f3d5f682.zip

unzip php-buildpack-v4.3.70.2-3.1-f3d5f682.zip

cf create-buildpack php5-v4.3 php5-v4.3/ 1

Installing plugins

Backup/restore plugin

Source: https://github.com/SUSE/cf-plugin-backup

- Download the plugin from https://github.com/SUSE/cf-plugin-backup/releases

- Install using cf install-plugin:

cf install-plugin <backup-restore-binary>